Enterprise MVP Statistics That Reveal the Real Path to Product-Market Fit

Enterprise MVPs can look convincing in a pilot and still fall apart when teams try to scale adoption, validate retention, or move toward a full rollout.

To help product teams make sense of that gap, our Arounda team compiled 30+ MVP development statistics covering timelines, iteration cycles, rollout challenges, and the benchmarks that matter in enterprise environments. Alongside the data, we share product-market fit analysis, expert perspective, and enterprise-focused insights to help product leaders interpret early signals more clearly and make stronger product decisions.

Article Key Takeaways

Here you'll find a structured summary of enterprise-grade MVP benchmarks, validation signals, and product-market fit factors.

- 30+ MVP statistics for enterprises covering development timelines, rollout challenges, iteration cycles, and failure patterns.

- Expert thoughts, practical recommendations, and product-market fit metrics for evaluating traction beyond pilot adoption.

- Why startup MVP benchmarks often mislead enterprise product teams.

- Core enterprise benchmarks for fit score, redesign pressure, and the iterative design cycle behind scalable products.

- A ranked view of common MVP failure reasons and the issues teams should address before growth.

Why Startup MVP Benchmarks Mislead Enterprise Product Teams

Startup benchmarks can sometimes oversimplify what an enterprise MVP is expected to prove. Startups focus on speed, getting early traction, and getting quick feedback. On the other hand, enterprise teams have to deal with longer rollout times, more stakeholders, compliance limits, and more complicated patterns of adoption. Because of this, the same benchmarks can give people false confidence, make pilot results seem better than they are, and push teams to scale up before the product is ready.

- Startup products get measured by speed; enterprise teams care more about rollout readiness and workflow fit.

- Success in an early pilot doesn't always mean it will scale across an enterprise.

- Validation signals are slower, weaker, and harder to interpret in enterprise products.

- Startup-style benchmarks can hide redesign risk, internal friction, and scale-up complexity.

Key Numbers at a Glance

- Most enterprises are still stuck in pilot mode:

Nearly two-thirds of respondents say their organizations have not yet begun scaling AI across the enterprise, which shows how often early experimentation fails to turn into broader rollout.

(Source: McKinsey, The State of AI)

- Scaled value remains limited:

In 2025, 46% of companies reported productivity impact at scale or said they were already capturing financial impact from AI, up from 33% a year earlier.

(Source: McKinsey, Upgrading Software Business Models to Thrive in the AI Era)

- Change management outweighs build costs:

For every $1 organizations spend on model development, they should expect to spend $3 on change management, including engineering support, user training, reinforcement, and performance monitoring.

(Source: McKinsey, Upgrading Software Business Models to Thrive in the AI Era)

- Rapid investment creates disconnected systems:

50% of surveyed CEOs report that rapid investment has resulted in disconnected technology within their organization.

(Source: IBM, CEOs Double Down on AI While Navigating Enterprise Hurdles)

- Scaled deployment is still far off for most organizations:

Organizations that lead with the right use cases, embed governance early, and demand measurable outcomes are making steady progress. Most, however, remain 12–18 months away from scaled deployment.

(Source: Forrester, The Copilot Reality Check)

Core enterprise MVP benchmarks: timeline, fit score, failure rate, iteration cycles

How to read these numbers:

These benchmarks show that enterprise MVPs move from pilot to scale more slowly than early traction often suggests. A realistic MVP timeline includes rollout friction, process changes, and adoption work before the product creates broader business value. The benchmarks also show that a strong product-market fit score in enterprise settings depends on measurable impact, process fit, and rollout readiness, not early usage alone.

Arounda team suggests:

- Before the pilot starts, make plans for the rollout. Early on, set clear rules for ownership, enablement, governance, and workflow changes to avoid delays later.

- Keep the MVP small and based on results: to prove value faster, focus on one workflow, one user group, and one measurable result.

- Plan for rework from the start: enterprise products often need process changes before they can be scaled, so the timeline and staffing should reflect that from the start.
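The $1-to-$3 ratio between model development and change management cited in the key numbers above can be turned into quick planning arithmetic. The sketch below is only an illustration: the function name, the default ratio parameter, and the sample spend are assumptions for this example, not figures from McKinsey or from Arounda projects.

```python
def rollout_budget(model_dev_spend: float, change_ratio: float = 3.0) -> dict:
    """Estimate change-management spend from model-development spend.

    change_ratio defaults to 3.0, mirroring the $1 : $3 rule of thumb quoted
    above; treat it as an assumption to adjust per organization.
    """
    if model_dev_spend < 0 or change_ratio < 0:
        raise ValueError("spend and ratio must be non-negative")
    change_mgmt = model_dev_spend * change_ratio
    return {
        "model_development": model_dev_spend,
        "change_management": change_mgmt,
        "total": model_dev_spend + change_mgmt,
    }


# Hypothetical example: a 200,000 build budget implies roughly 600,000
# for engineering support, training, reinforcement, and monitoring.
budget = rollout_budget(200_000)
print(budget)
```

Keeping the ratio as a parameter makes it easy to rerun the estimate if the organization's own history suggests a different split.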

Enterprise MVP Development Timeline Statistics

- A 252-user enterprise pilot took three months to launch:

The composite organization's initial effort to implement and integrate Copilot for Sales and to conduct a pilot with 252 users takes three months and requires three full-time equivalent (FTE) resources.

(Source: Forrester TEI, The Total Economic Impact of Microsoft Copilot for Sales)

- Initial rollouts started with 10% of users and took up to four months:

Interviewees said their organizations' initial implementations of Copilot for Sales typically started with a short pilot covering around 10% of users. After that, the rollouts took anywhere from one month to four months. The survey revealed an average initial deployment of three months.

(Source: Forrester TEI, The Total Economic Impact of Microsoft Copilot for Sales)

- Enterprise product teams have a three- to six-month window to define direction:

C-level executives at software organizations have a crucial three- to six-month window to define their agentic AI product strategy, as the industry is at an inflection point.

(Source: Gartner, Gartner Predicts 40 Percent of Enterprise Apps Will Feature Task-Specific AI Agents by 2026)

- One quarter is becoming the new benchmark for early measurable impact:

BCG advises organizations to expect measurable results within a quarter and to hold end-to-end implementation to the same faster standard.

(Source: BCG, Rethinking Vendor Strategy: Age of AI Acceleration)

- A typical MVP takes 12 to 18 weeks to reach pilot readiness:

Based on Arounda's experience across 180+ enterprise projects, a typical enterprise timeline for an MVP ranges from 12 to 18 weeks, including discovery, design, development, and pilot preparation.

(Source: Arounda, internal benchmark)

How to read these numbers:

MVP development for enterprises is moving toward smaller first launches, tighter pilot groups, and earlier rollout planning. Teams need cleaner validation, fewer dependencies, and less delivery risk before wider deployment starts. That shift is changing the MVP development timeline itself. More effort now goes into integration, pilot structure, and rollout readiness before expansion. Stronger MVP development depends on how well the first release is prepared for real operating conditions inside the business.

Arounda team suggests:

- Keep the first pilot small. One team, one workflow, and one clear metric give better signals and fewer rollout issues later.

- Bring integration decisions forward. Enterprise timelines usually slip when systems, permissions, and dependencies surface too late.

- Prepare for rollout during the build. Ownership, training, and success criteria should be ready before the pilot ends.

Product-Market Fit Statistics in Enterprise Context

- Experimentation still far outpaces real business value:

While 88% of organizations are now experimenting with AI, 81% do not report any meaningful bottom-line gains.

(Source: McKinsey, The State of Organizations 2026)

- Only 12% of CEOs say AI has delivered both cost and revenue benefits:

Only one-in-eight (12%) CEOs say AI has delivered both cost and revenue benefits.

(Source: PwC, 2026 Global CEO Survey)

- Fewer than 60% of workers with access use AI in their daily workflow:

Among those workers with access, fewer than 60% use it in their daily workflow, a pattern that remains largely unchanged from last year.

(Source: Deloitte, The State of AI in the Enterprise 2026)

- 2–3x deeper product embedding among companies with measurable gains:

CEOs reporting both cost and revenue gains are two to three times more likely to say they have embedded AI extensively across products and services.

(Source: PwC, 2026 Global CEO Survey)

How to read these numbers:

Many enterprise products enter pilot mode before they can deliver real day-to-day value. When deciding how to measure product-market fit, look for signals that point to growth: repeated use inside real workflows, expansion beyond the first pilot group, and clear operational or financial results. If those signals are missing early on, slow down expansion, tighten the use case, and resolve adoption gaps before scaling further.

Arounda team suggests:

- Set PMF criteria before launch: define expected daily usage, post-pilot expansion, and one business outcome the product must improve.

- Review pilot results through real workflow usage and measurable value, so active accounts do not distort the picture.

- Expand only the use cases that keep teams engaged in production and continue showing visible value after initial access.
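One way to make the pre-launch criteria above concrete is to write them down as explicit thresholds and check pilot data against them. The following is a minimal sketch under stated assumptions: every class, field, and threshold value is hypothetical and chosen for illustration, and the business metric is assumed to be one where higher is better.

```python
from dataclasses import dataclass


@dataclass
class PilotSnapshot:
    """Pilot measurements; all field names are hypothetical."""
    users_with_access: int
    daily_active_users: int
    teams_at_pilot_start: int
    teams_after_pilot: int
    outcome_baseline: float  # target business metric before the pilot
    outcome_current: float   # same metric after the pilot (higher is better)


@dataclass
class PmfCriteria:
    """Thresholds agreed before launch; the defaults are placeholders."""
    min_daily_usage_rate: float = 0.60
    min_new_teams: int = 1
    min_outcome_lift: float = 0.10


def evaluate_pilot(s: PilotSnapshot, c: PmfCriteria) -> dict:
    """Return a pass/fail flag per criterion plus an overall flag."""
    usage_rate = (s.daily_active_users / s.users_with_access
                  if s.users_with_access else 0.0)
    new_teams = s.teams_after_pilot - s.teams_at_pilot_start
    lift = ((s.outcome_current - s.outcome_baseline) / s.outcome_baseline
            if s.outcome_baseline else 0.0)
    checks = {
        "daily_usage": usage_rate >= c.min_daily_usage_rate,
        "expansion": new_teams >= c.min_new_teams,
        "business_outcome": lift >= c.min_outcome_lift,
    }
    # Expand only when every pre-agreed criterion is met.
    checks["ready_to_expand"] = all(checks.values())
    return checks


# Made-up pilot data for illustration only.
strong = PilotSnapshot(200, 130, 1, 3, 40.0, 46.0)
weak = PilotSnapshot(200, 90, 1, 1, 40.0, 41.0)
print(evaluate_pilot(strong, PmfCriteria()))
print(evaluate_pilot(weak, PmfCriteria()))
```

The daily-usage check divides daily active users by users with access rather than by total accounts, which reflects the suggestion to review real workflow usage so that active accounts do not distort the picture.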

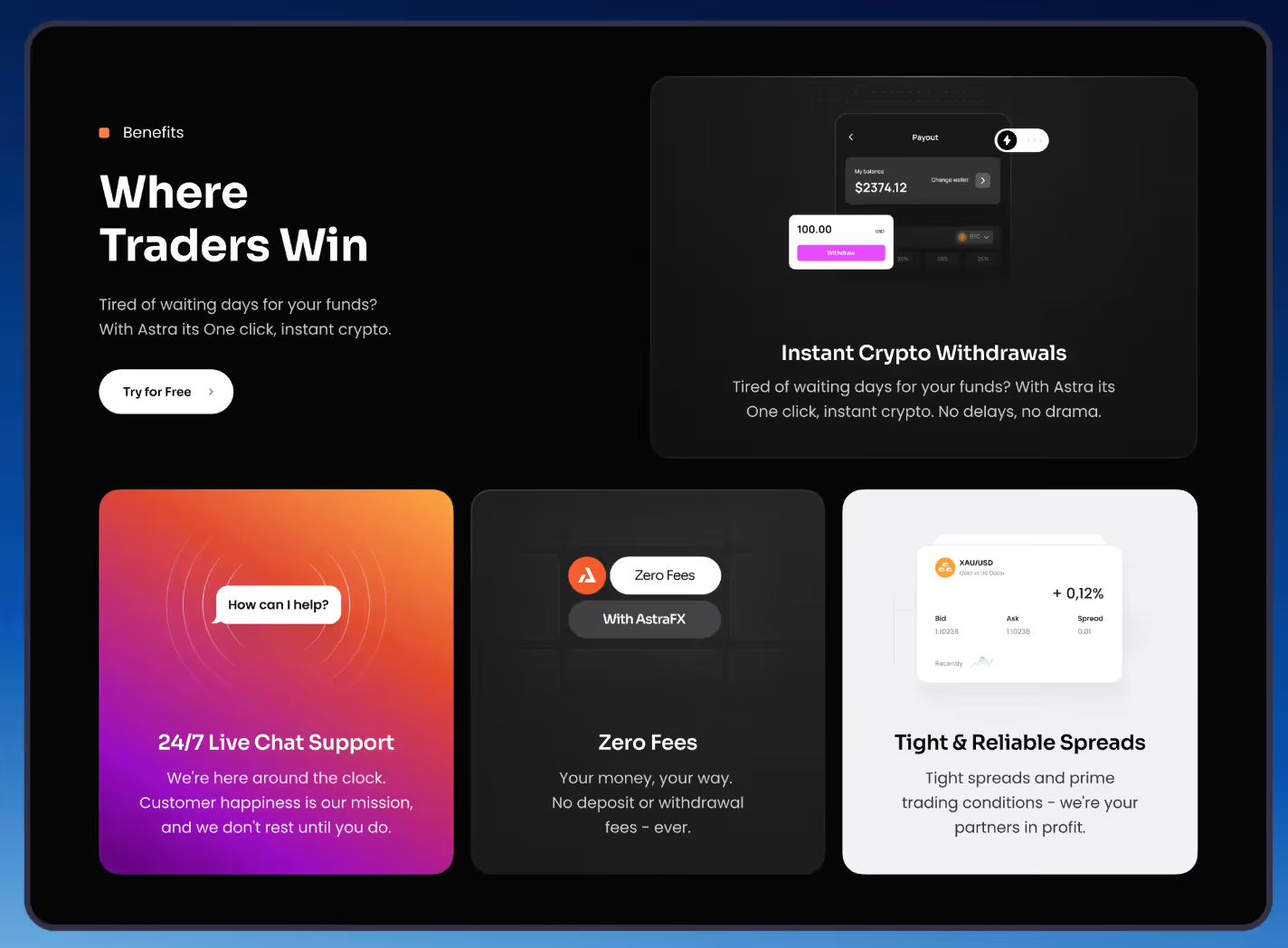

A good example of a focused enterprise-style MVP that can create clearer fit signals early is Astra. Astra Capital, a forex platform, came to Arounda looking for a modern product and web experience that could build trust, stand out in the competitive Web3 space, and remain easy for traders to navigate. Over a five-month collaboration, our Arounda team delivered MVP development, web development, and website design, creating a cleaner structure, more intuitive navigation, responsive layouts, and a design system built for consistency.

The results included stronger user engagement, improved brand recognition, higher conversions through better wallet connection flows, and faster access to live support.

Enterprise Adoption Pressure Across Industries

- SaaS: Compared to the change experienced throughout their organizations in 2025, Software and Platforms leaders expect an 88% higher level of change in 2026.

(Source: Accenture, Pulse of Change)

- Healthcare: Compared to the change experienced throughout their organizations in 2025, Health leaders expect an 82% higher level of change in 2026.

(Source: Accenture, Pulse of Change)

- Fintech: 57% of banking IT executives expect broad or fully embedded AI agent adoption in risk, compliance, and fraud detection within three years.

(Source: Accenture, Top Banking Trends for 2026)

- AI / ML: 32% of C-suite leaders use AI tools daily in their work, up from 8% in March 2024.

(Source: Accenture, Pulse of Change)

How to read these numbers:

The numbers above show that adoption pressure is growing in every industry, but it takes different forms. Leaders in SaaS and healthcare expect far more change in 2026 than in 2025, which means workflows, delivery priorities, and product expectations will shift more quickly. In fintech, the pressure is moving into core operational areas such as fraud detection, compliance, and risk. In AI and ML, daily use by executives shows that these tools are already becoming a normal part of decision-making.

Arounda team suggests:

- SaaS: Keep rollout cycles short, check usage often, and update onboarding early because expectations for products in this area can change quickly.

- Healthcare: Before a wider rollout starts, make sure the product fits with real workflows, training needs, and operational limits to make it easier for people to use it.

- Fintech: Start with a single, well-defined use case in a core function. Only expand once the product has shown that it can work consistently in regulated settings.

- AI/ML: Keep a close eye on daily use and only move features that are still being used in real decision-making to a wider audience.

MVP Iteration and Redesign Statistics

- Rollout maturity remains extremely low: 8% of organizations are in the nascent stage, 39% are emerging, 31% are developing, 22% are expanding, and only 1% of C-suite respondents describe their gen AI rollouts as mature.

(Source: McKinsey, Superagency in the Workplace)

- One-off pilots still create rework instead of scalable systems: There are few disincentives for building pilots and one-off initiatives over building for scale, leading to rework and solutions that cannot be broadly applied. McKinsey also reports that only 10% to 20% of isolated AI experiments in the past two years scaled to create value.

(Source: McKinsey, The New Economics of Enterprise Technology in an AI World)

- Only 30% are redesigning key processes around AI: Deloitte reports that only 30% of organizations are redesigning key processes around AI, while 37% still use AI at a surface level with little or no change to underlying business processes.

(Source: Deloitte, State of AI Report 2026)

- Reducing technical debt can improve ROI by up to 29%: Paying down technical debt from legacy systems can improve AI ROI by up to 29% because it reduces friction and rework.

(Source: IBM, How to Maximize AI ROI in 2026)

- Technical debt can add 15% to 22% to schedules: Technical debt can add 15% to 22% to schedules, turning 30-month implementations into 36-month implementations.

(Source: IBM, A Practical Approach to Boosting Your AI ROI)

- Around one-third of digital products revisit core flows after the first release: Based on Arounda's experience across 10+ years and 350+ completed projects, around one-third of digital products revisit core flows or interface structure after the first release, once real usage patterns and internal workflow constraints become visible.

(Source: Arounda, internal benchmark)

How to read these numbers:

Enterprise MVPs rarely move from pilot to scale in a straight line. Most teams are still working through immature rollouts, limited process redesign, and technical debt that slows implementation and creates rework. In practice, an iterative design process diagram for enterprise products should not end with the first launch. It should include workflow adjustment, technical cleanup, and rollout stabilization, because real operating conditions usually expose issues that early pilots do not catch.

Arounda team suggests:

- Plan one post-launch refinement cycle from the start, because enterprise products often reveal structural issues only after teams begin using them in real workflows.

- Review tech debt before wider rollout, since unseen friction in the system can extend timelines and add rework across the next phases of delivery.

- Use the pilot as a pressure test for workflows, not just features, so redesign decisions reflect how the product fits (and sometimes doesn't fit) inside the business.
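The schedule impact of technical debt quoted above (15% to 22%) is easy to check with simple arithmetic. The sketch below applies that range to the 30-month baseline from the same statistic; the function name is hypothetical, and the calculation assumes the overhead applies uniformly to the whole schedule, which is a simplification.

```python
def schedule_with_debt(base_months: float, overhead_pct: int) -> float:
    """Return the schedule length after adding a percentage overhead."""
    if base_months < 0 or overhead_pct < 0:
        raise ValueError("inputs must be non-negative")
    return base_months * (100 + overhead_pct) / 100


base = 30  # baseline from the cited statistic, in months
low = schedule_with_debt(base, 15)   # 34.5 months
high = schedule_with_debt(base, 22)  # 36.6 months
print(f"{base}-month plan with tech debt: {low:.1f} to {high:.1f} months")
```

The cited jump from 30 to 36 months corresponds to a 20% overhead, which sits inside the quoted range. Running the same calculation on a team's own baseline gives a rough buffer to include when planning the post-launch refinement cycle.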

Enterprise MVP Failure Statistics

- Only 30% of companies fully meet timeline, budget, and scope expectations: Only 30% of companies fully meet their timeline, budget, and scope expectations in large-scale tech program implementations.

(Source: BCG Platinion, Why 70% of Transformations Miss the Mark and How to Fix Them)

- 83% of transformation leaders miss their targets at least half the time: A staggering 83% of leaders overseeing transformation initiatives report that they fail to meet their objectives at least half the time.

(Source: Kearney, 2025 Transformation Study)

- 70% of ERP initiatives fail to meet original business use case goals: Gartner reports that 70% of recently implemented ERP initiatives will fail to fully meet their original business use case goals, leading to widespread failure.

(Source: Gartner, Enterprise Resource Planning)

- Two-thirds of executives cite resistance to change as the biggest obstacle: Among the executives surveyed, two-thirds cited resistance to change as their biggest obstacle, followed by senior-leader misalignment and organizational fatigue.

(Source: Kearney, 2025 Transformation Study)

How to read these numbers:

These numbers show how easily enterprise initiatives lose momentum once delivery gets complicated. When the scope keeps growing, decisions take longer, and rollout meets resistance within the business, teams miss their goals. For enterprise MVPs, the message is clear: a product can look good at first but fail later if the rollout isn't handled carefully.

Here’s what one of our expert designers says about where enterprise MVPs often go wrong:

“A common mistake is building an MVP around the end user alone while overlooking the priorities of the buyer. From the start, the product should signal value to stakeholders through clear ROI potential, security readiness, and alignment with internal standards.”

Yevhen, UI/UX designer

Arounda team suggests:

- Keep the launch scope small, with one business problem, one user group, and one success threshold that leadership agrees to before rollout starts.

- Align all decision-makers early and check progress against business goals during delivery so the product stays true to its original purpose.

- Treat change management as part of execution from day one, and plan so that adoption friction, internal fatigue, and workflow resistance don't break the rollout even when the product itself is good.

Enterprise MVP failure reasons ranked by frequency

MVP to Growth and What the Data Shows

Growth rarely comes from a promising pilot alone. It usually follows stronger rollout logic, clearer adoption signals, and a product structure that can hold up under real operating conditions. In practice, enterprise MVP development creates better growth outcomes when teams connect early validation with workflow fit, technical readiness, and a realistic path to scale.

A good example of how MVPs work to support later growth is GT Protocol. GT Protocol, an AI-powered investment platform, came to Arounda looking for a product experience that could simplify crypto investing, stay consistent across platforms, and remain accessible even for users with no crypto background.

Our Arounda team delivered branding, UI/UX design, website design, web development, and MVP work, creating a cleaner structure, smart onboarding, modular layouts, interactive tooltips, and a responsive dark UI.

The results included 2x client base growth, an 88% user satisfaction rate, +52% growth in community, and a 3.5x increase in engagement.

What These Statistics Mean for Enterprise Product Strategy

Enterprise product strategy breaks down when teams treat early rollout as proof of long-term success. Stronger decisions come from tighter market fit analysis, clearer adoption criteria, and earlier preparation for scale, process change, and delivery pressure.

- Establish success criteria before rollout begins, including how deeply the product should be used, what workflow it must fit, and which specific business result it is expected to improve.

- Scope the first release tightly so your team can see where the product fits, where friction crops up, and what needs to change before it is deployed more widely.

- Plan for scale earlier by thinking through product strategy alongside integration, change management, and redesign requirements for the MVP.

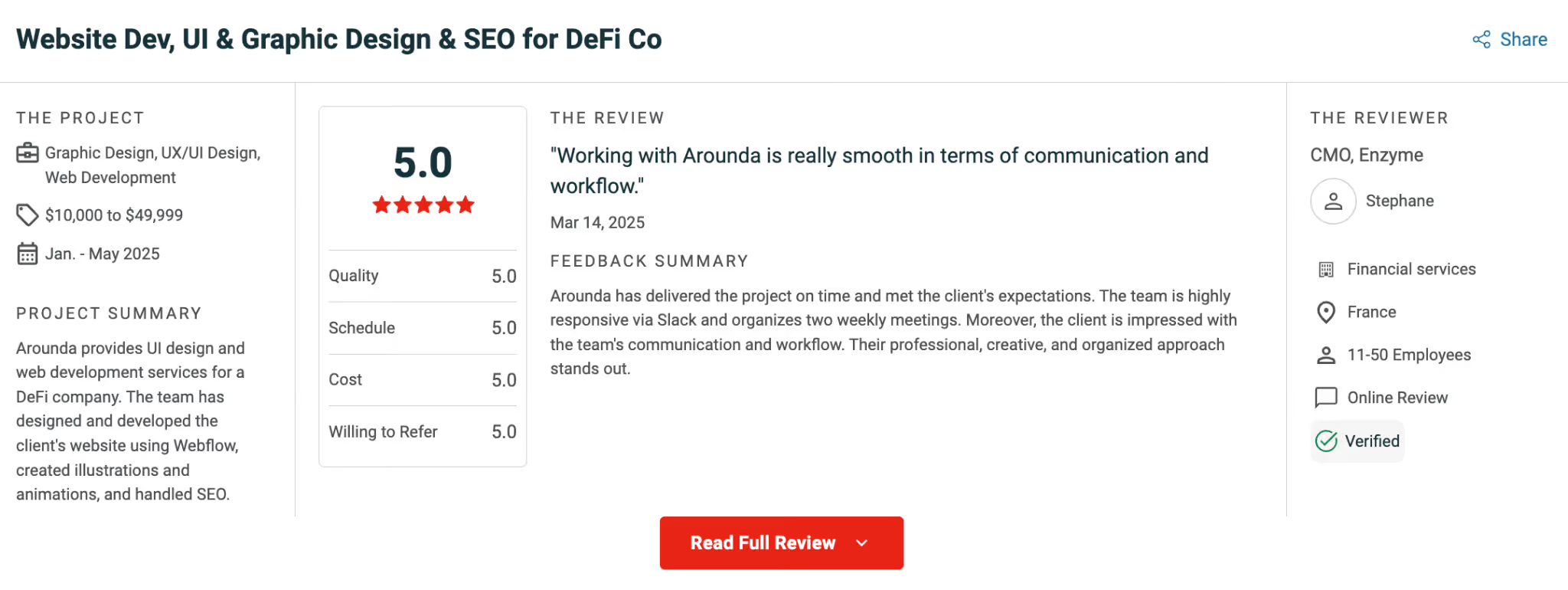

Good development and strategic design help enterprise teams turn an MVP into a product that can scale with less friction and deliver stronger business results. With 10+ years of experience and work across 180+ enterprise products, Arounda knows how to build digital experiences that support adoption, clarity, and long-term growth. That expertise is reflected in the feedback we receive from clients. Here is what one of them said about working with us.

Summary

Enterprise MVPs follow a different path than startup MVPs, and the statistics in this article make that clear. In large organizations, rollout pressure, adoption depth, workflow fit, and business value matter far earlier than teams expect. If you want an MVP that can gain traction, scale cleanly, and support real business outcomes across your company, contact us.

FAQ

It happens when the product stops helping the team learn and starts slowing down every next decision. You usually see it when simple changes take too long, product logic is full of exceptions, and teams keep protecting old shortcuts because fixing them feels too expensive.

Enterprise products take longer because the adoption process involves approvals, integrations, and process change, and people need to trust the product before they start using it regularly. Once it slots into day-to-day operations, the retention is often stronger because people are relying on the product to get their work done.

In that case, the score shows post-adoption value, not pure demand. The useful question is simple: after rollout, do people keep using the product because it helps them, or only because they have to? That is why teams should look at repeat usage, feature depth, completion rates, and whether users stay active when support is reduced.

Look at what happens when pressure drops. If usage stays stable, spreads across teams, and grows into broader workflows, that is a healthy sign. If people only complete the required actions and disappear, the fit is weak.

When each new version keeps piling on complexity without addressing the fundamental issues. If the architecture isn't stable, the workflows aren't right, or the product keeps leaning on hacky workarounds, another attempt to fix things can just create bigger problems later on. In that case, it's often better to start from scratch.

Because pilots are controlled and rollouts are messy. A pilot may involve a small group, extra support, cleaner data, and a narrower workflow. Full rollout exposes everything at once: training gaps, integration issues, inconsistent usage, security concerns, team resistance, and edge cases nobody saw earlier. A pilot can show that the product works in a limited setting. It does not guarantee the product will hold up across the whole organization.
